Test Execution – Complete Guide

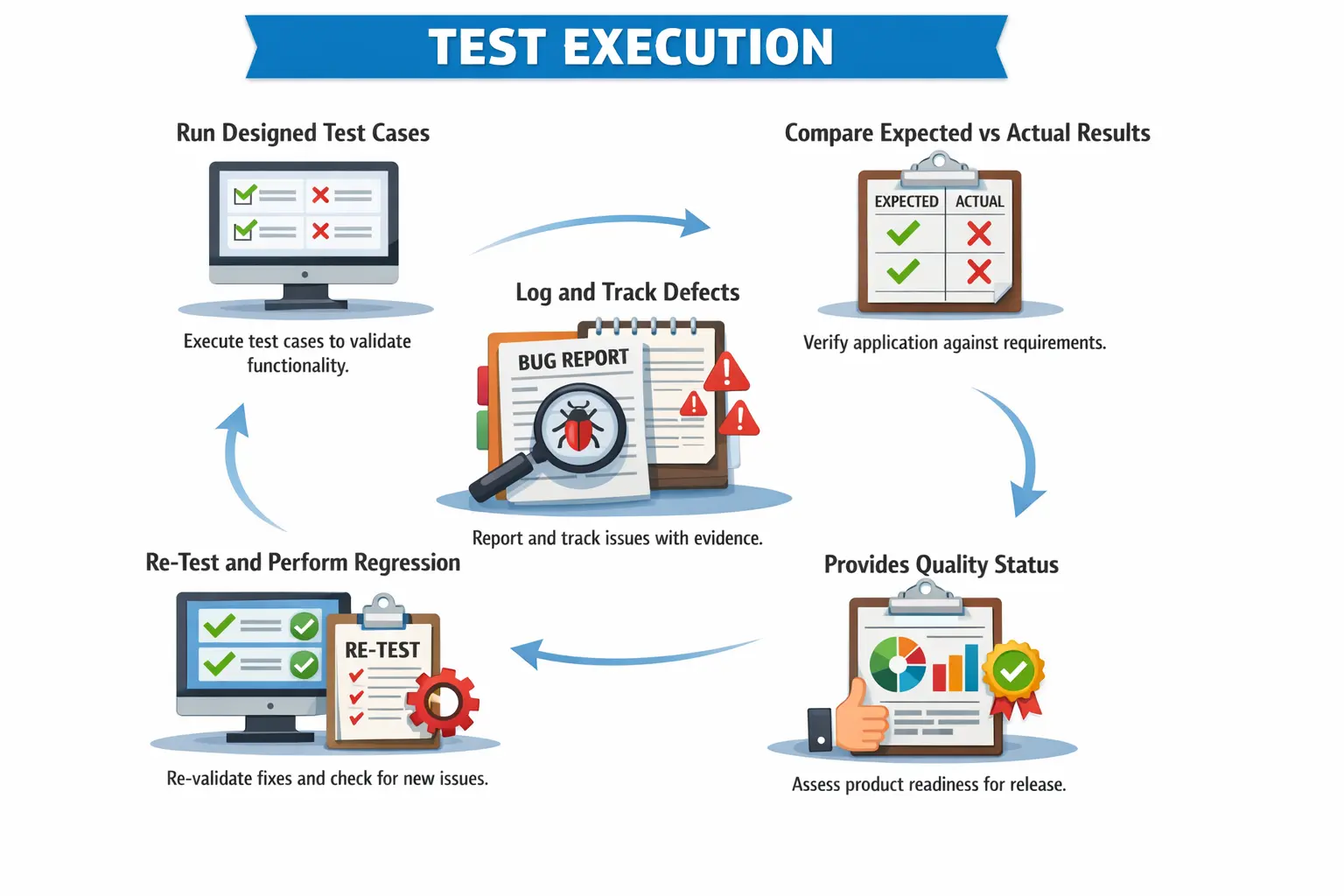

Test Execution is the stage in the Software Testing Life Cycle (STLC) where planning and preparation transform into measurable quality validation. After requirements are analyzed and test cases are designed, the real evaluation of the product begins during execution. This is the phase where the application is actually tested against defined expectations, and defects are uncovered.

Test Execution answers the most practical and decisive question in testing:

“Does the application behave as expected?”

It is during this phase that theoretical validation becomes real evidence. Testers interact with the application, compare actual behavior with expected results, and determine whether the system is ready for release.

For manual testers, test execution is a disciplined and structured activity. It requires focus, precision, observation skills, and strong documentation practices. Poor execution leads to missed defects, incorrect reporting, and unreliable release decisions.

Definition of Test Execution

Test Execution is the process of running approved test cases in a prepared environment, comparing actual results with expected results, and identifying deviations in the form of defects.

It is not simply clicking through the application. Test execution is a structured activity governed by entry criteria, execution strategy, defect management processes, and reporting mechanisms.

During execution, testers validate implemented functionality against documented requirements. If the system behaves as expected, the test case is marked as passed. If it does not, a defect is logged.

Test execution transforms test design artifacts into measurable outcomes. It provides visibility into product quality and stability.

Without effective test execution, test planning and test design cannot deliver their value, because nothing verifies the product against them.

Purpose of Test Execution

The primary purpose of test execution is to validate implemented functionality against requirements. Every executed test case verifies whether the application meets defined expectations.

Test execution identifies defects and quality risks. Even well-designed systems may contain logic flaws, integration gaps, or validation errors.

It provides evidence of testing. Execution records, defect logs, and status reports demonstrate that validation activities were performed.

Test execution supports release decisions. Pass rates, open defect counts, and severity distribution influence Go or No-Go decisions.

Where applicable, test execution also covers non-functional aspects, such as usability observations or how quickly the application responds.

Ultimately, test execution provides a factual quality assessment of the application.

Preconditions for Test Execution

Effective test execution requires proper preparation. Execution should never begin without meeting defined entry criteria.

Approved and reviewed test cases must be available. Unreviewed or incomplete test cases lead to confusion and inconsistent validation.

The test environment must be ready and stable. An unstable environment can produce misleading results.

The build must be deployed successfully and verified through smoke testing. Execution should not begin on an unstable build.

Test data must be prepared and validated. Missing or incorrect test data blocks execution and wastes time.

Dependencies such as APIs, third-party services, and configurations must be confirmed.

Only when entry criteria are satisfied should execution proceed.
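The entry criteria above can be treated as a simple checklist. The following is a minimal sketch in Python, not a standard tool: the `EntryCriteria` class, its field names, and both helper functions are hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class EntryCriteria:
    """Hypothetical checklist mirroring the preconditions described above."""
    test_cases_approved: bool
    environment_stable: bool
    smoke_test_passed: bool
    test_data_ready: bool
    dependencies_confirmed: bool


def unmet_criteria(criteria: EntryCriteria) -> list[str]:
    """Return the names of all criteria that are not yet satisfied."""
    return [name for name, ok in vars(criteria).items() if not ok]


def can_start_execution(criteria: EntryCriteria) -> bool:
    """Execution may start only when every criterion is satisfied."""
    return not unmet_criteria(criteria)


status = EntryCriteria(
    test_cases_approved=True,
    environment_stable=True,
    smoke_test_passed=False,
    test_data_ready=True,
    dependencies_confirmed=True,
)
print(unmet_criteria(status))       # ['smoke_test_passed']
print(can_start_execution(status))  # False
```

Listing the unmet criteria, rather than returning only a yes or no, gives the team a concrete item to resolve before execution starts.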

Test Execution Activities – Step by Step

Executing Test Cases

Execution begins by following test steps precisely as documented. Testers must avoid assumptions and deviations unless performing exploratory testing.

Defined test data must be used unless specific exploratory validation is intended.

Each action must be performed carefully to ensure accurate validation.

Execution requires attention to detail. Even small discrepancies may indicate defects.

Discipline during execution reduces false positives (failures reported where no defect exists) and false negatives (real defects marked as passed).

Recording Results

After executing each test case, testers must record the result clearly.

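Test cases are typically marked as Pass, Fail, or Blocked. Blocked means the test case could not be executed, for example because of an unresolved dependency or an environment issue.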

Actual results must be documented accurately. Clear documentation helps developers reproduce issues.

Incomplete result documentation reduces defect quality.

Accurate result recording ensures reliable reporting and traceability.
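One way to picture a recorded result is as a small structured record. The sketch below is illustrative only: `Status`, `ExecutionRecord`, and the rule that a Fail or Blocked entry must carry an explanation are assumptions, not the format of any particular test management tool.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PASS = "Pass"
    FAIL = "Fail"
    BLOCKED = "Blocked"


@dataclass
class ExecutionRecord:
    test_case_id: str
    status: Status
    actual_result: str = ""  # what was observed, or why the case is blocked

    def __post_init__(self) -> None:
        # A Fail or Blocked entry without an explanation cannot be acted on.
        if self.status is not Status.PASS and not self.actual_result.strip():
            raise ValueError(
                f"{self.test_case_id}: {self.status.value} needs an actual result or reason"
            )


records = [
    ExecutionRecord("TC-001", Status.PASS),
    ExecutionRecord("TC-002", Status.FAIL, "Error 500 shown after clicking Save"),
    ExecutionRecord("TC-003", Status.BLOCKED, "Payment sandbox is unavailable"),
]
for record in records:
    print(record.test_case_id, record.status.value)
```

Rejecting an empty explanation at the moment of recording is one possible way to enforce the documentation discipline described in this section.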

Logging Defects

When actual results differ from expected results, a defect must be logged.

Defect reports should include clear steps to reproduce, expected results, actual results, and supporting evidence such as screenshots.

Severity and priority must be assigned correctly. Severity reflects the impact of the defect on the system, while priority reflects how urgently it must be fixed.

High-quality defect logging improves resolution efficiency.

Poorly written defects delay fixes and increase rejection rates.
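The fields of a good defect report can be checked for completeness before submission. This is a minimal sketch under stated assumptions: the `DefectReport` class, its field names, and the example severity and priority labels are hypothetical and do not reflect any specific defect tracker.

```python
from dataclasses import dataclass, field


@dataclass
class DefectReport:
    title: str
    steps_to_reproduce: list[str]
    expected_result: str
    actual_result: str
    severity: str  # impact on the system, e.g. "Critical", "Major", "Minor"
    priority: str  # urgency of the fix, e.g. "High", "Medium", "Low"
    evidence: list[str] = field(default_factory=list)  # screenshot or log paths


def missing_fields(report: DefectReport) -> list[str]:
    """Return the names of fields that are empty, in declaration order."""
    return [name for name, value in vars(report).items() if not value]


draft = DefectReport(
    title="Save button returns error 500",
    steps_to_reproduce=["Log in", "Open profile", "Click Save"],
    expected_result="Profile is saved and a confirmation appears",
    actual_result="",
    severity="Major",
    priority="High",
)
print(missing_fields(draft))  # ['actual_result', 'evidence']
```

A check like this catches incomplete reports before they reach developers, which is where rejections and delays usually start.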

Re-Testing Fixes

After developers fix defects, testers re-test them in the new build that contains the fix.

Re-testing verifies whether the defect has been resolved.

If the issue persists, the defect is reopened.

If resolved successfully, the defect is closed.

Re-testing confirms that each fix actually works before the defect is closed.
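The re-test decision can be expressed as a small state transition. The status names "Fixed", "Reopened", and "Closed" below are assumptions for illustration; real defect trackers define their own workflows and labels.

```python
def retest_outcome(current_status: str, still_reproduces: bool) -> str:
    """Return the next defect status after a re-test.

    Only a defect that developers have marked as fixed is eligible for re-test.
    """
    if current_status != "Fixed":
        raise ValueError(f"Cannot re-test a defect in status {current_status!r}")
    return "Reopened" if still_reproduces else "Closed"


print(retest_outcome("Fixed", still_reproduces=True))   # Reopened
print(retest_outcome("Fixed", still_reproduces=False))  # Closed
```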

Regression Testing

Regression testing is a critical part of test execution.

When fixes or changes are implemented, previously working functionality may break.

Regression testing re-executes impacted test cases to ensure system stability.

Risk-based regression prioritizes high-impact areas.

Regression testing reduces the risk of defects leaking into production.
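Selecting impacted test cases and ordering them by risk can be sketched as follows. The `RegressionCase` class, the module tags, and the 1 to 3 risk scale are hypothetical. The sketch also simplifies impact analysis by matching only on directly changed modules.

```python
from dataclasses import dataclass


@dataclass
class RegressionCase:
    case_id: str
    module: str
    risk: int  # 1 = low, 2 = medium, 3 = high


def select_regression_suite(
    cases: list[RegressionCase], changed_modules: set[str]
) -> list[RegressionCase]:
    """Keep cases that touch a changed module, highest risk first."""
    impacted = [c for c in cases if c.module in changed_modules]
    return sorted(impacted, key=lambda c: c.risk, reverse=True)


cases = [
    RegressionCase("TC-010", "login", 3),
    RegressionCase("TC-020", "checkout", 3),
    RegressionCase("TC-021", "checkout", 1),
    RegressionCase("TC-030", "profile", 2),
]
suite = select_regression_suite(cases, {"checkout", "profile"})
print([c.case_id for c in suite])  # ['TC-020', 'TC-030', 'TC-021']
```

In practice, modules that depend on the changed ones may also need to be included, which this sketch does not attempt to model.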

Types of Manual Test Execution

Functional test execution validates business rules and system behavior.

Smoke test execution verifies build stability before detailed testing begins.

Sanity test execution validates specific fixes or minor changes.

Regression test execution ensures existing functionality remains unaffected by changes.

Exploratory test execution involves simultaneous learning and testing without predefined steps.

User Acceptance Testing (UAT) support involves assisting business users as they validate functionality.

Each execution type serves a distinct purpose within the testing lifecycle.

Manual Tester’s Responsibilities

Manual testers must execute test cases accurately and consistently.

They must maintain discipline during execution to avoid missing defects.

They must log high-quality defects with reproducible steps.

They must update execution status regularly in test management tools.

They must communicate blockers, risks, and environment issues promptly.

Testers must also prioritize high-risk areas when time constraints exist.

Professional execution behavior strengthens testing credibility.

Test Execution vs Test Design

Test Design focuses on preparing validation artifacts.

Test Execution focuses on validating functionality.

Test Design produces test cases and scenarios.

Test Execution produces pass/fail results and defect reports.

Test Design typically occurs before a build is available.

Test Execution begins after build deployment.

Both phases are interconnected and equally important.

Test Execution Deliverables

Executed test cases provide evidence of coverage and validation.

Defect reports document deviations from expected behavior.

Daily or weekly execution reports provide status visibility.

An updated Requirements Traceability Matrix (RTM) shows which requirements are covered by executed tests.

Execution summary reports support release decisions.

Deliverables reflect the quality status of the application.
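The numbers in an execution summary report can be derived directly from recorded results. The sketch below makes one assumption that teams define differently: pass rate is calculated over executed cases only (Pass plus Fail), with Blocked cases reported separately. The function name and the dictionary keys are illustrative.

```python
from collections import Counter


def execution_summary(results: list[str], open_defect_severities: list[str]) -> dict:
    """Summarize statuses and open defects for a status report."""
    counts = Counter(results)
    executed = counts["Pass"] + counts["Fail"]  # Blocked cases were never run
    pass_rate = round(100 * counts["Pass"] / executed, 1) if executed else 0.0
    return {
        "total": len(results),
        "passed": counts["Pass"],
        "failed": counts["Fail"],
        "blocked": counts["Blocked"],
        "pass_rate_percent": pass_rate,
        "open_defects_by_severity": dict(Counter(open_defect_severities)),
    }


summary = execution_summary(
    ["Pass", "Pass", "Pass", "Fail", "Blocked"],
    ["Major", "Minor", "Minor"],
)
print(summary["pass_rate_percent"])         # 75.0
print(summary["open_defects_by_severity"])  # {'Major': 1, 'Minor': 2}
```

Reporting blocked cases separately keeps the pass rate from hiding gaps in coverage.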

Common Issues During Test Execution

Environment instability is a frequent challenge. Server crashes or configuration issues disrupt execution.

Incomplete test data may block test scenarios.

Frequent build changes require re-validation and slow progress.

Time pressure may force prioritization of critical tests.

Test cases blocked by unresolved dependencies delay coverage.

Managing these challenges requires communication and planning.

Best Practices in Test Execution

Execute tests systematically rather than randomly.

Prioritize high-risk and high-impact areas.

Maintain evidence for failed cases.

Communicate issues immediately rather than delaying reporting.

Follow defined exit criteria before concluding execution.

Track daily progress to avoid last-minute surprises.

Structured execution improves reliability and transparency.

Importance of Documentation During Execution

Documentation ensures traceability and audit readiness.

Execution logs demonstrate compliance with testing processes.

Defect records provide historical reference.

Accurate documentation supports quality metrics and reporting.

Clear records improve stakeholder confidence.

Test Execution in Agile Projects

In Agile environments, test execution occurs continuously within sprints.

Testers validate user stories as they are developed.

Defect turnaround cycles are short.

Regression testing is incremental.

Execution feedback influences backlog prioritization.

Agile test execution emphasizes speed and collaboration.

Risk-Based Test Execution

Not all test cases carry equal importance.

Risk-based execution prioritizes critical business flows.

High-impact scenarios receive early validation.

Low-risk cosmetic issues may be deferred under time constraints.

Risk-based strategies focus limited effort on the areas where failures would be most costly.
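A common way to rank test cases is to score each one as likelihood of failure multiplied by business impact, then fill the available time in descending score order. The sketch below assumes a 1 to 5 scale for both factors and a fixed time budget. The `PlannedCase` class, the case IDs, and the estimates are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlannedCase:
    case_id: str
    likelihood: int  # 1 (unlikely to fail) to 5 (very likely)
    impact: int      # 1 (cosmetic) to 5 (critical business flow)
    minutes: int     # estimated execution time


def risk_score(case: PlannedCase) -> int:
    return case.likelihood * case.impact


def plan_execution(cases: list[PlannedCase], budget_minutes: int) -> list[str]:
    """Pick cases in descending risk order until the time budget runs out."""
    planned, remaining = [], budget_minutes
    for case in sorted(cases, key=risk_score, reverse=True):
        if case.minutes <= remaining:
            planned.append(case.case_id)
            remaining -= case.minutes
    return planned


cases = [
    PlannedCase("TC-FOOTER-TYPO", likelihood=2, impact=1, minutes=10),
    PlannedCase("TC-CHECKOUT", likelihood=5, impact=5, minutes=30),
    PlannedCase("TC-REPORT-EXPORT", likelihood=3, impact=3, minutes=40),
    PlannedCase("TC-LOGIN", likelihood=4, impact=5, minutes=20),
]
print(plan_execution(cases, budget_minutes=55))  # ['TC-CHECKOUT', 'TC-LOGIN']
```

With a 55-minute budget, the two critical flows are executed and the lower-risk cases are deferred, which matches the deferral described above.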

Interview Perspective

Test execution is a fundamental interview topic for manual testers.

A short answer defines test execution as running test cases and comparing results.

A detailed answer explains environment preparation, defect logging, re-testing, and regression testing.

Interviewers may ask how to handle blocked cases or unstable builds.

Strong execution knowledge reflects practical experience.

Key Takeaway

Test Execution is the phase where designed test cases are run, results are recorded, and defects are identified.

It validates implemented functionality against requirements and provides measurable evidence of quality.

Manual testers must execute systematically, log defects clearly, and perform regression testing thoroughly.

Test Execution converts planning and design into real quality validation, revealing the true state of the product before release.